Primer on Computer

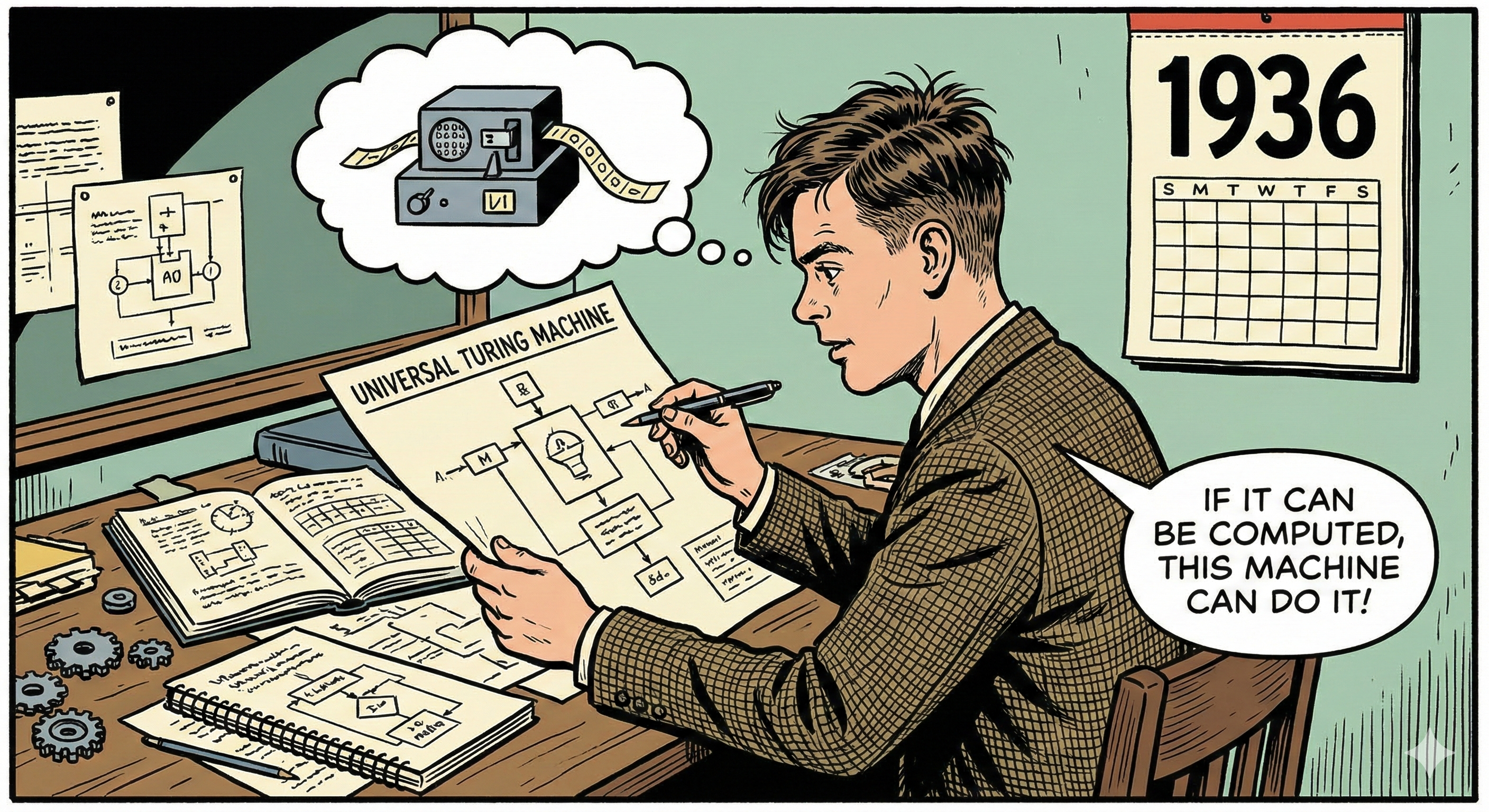

Universal Turing Machine

The Turing machine is a conceptual model of a machine that can implement an arbitrary algorithm by manipulating a memory tape according to a fixed table of rules. The memory tape is a linear sequence of symbols that the machine reads and alters as the rule table dictates. While the tape may need to be unbounded in length, the set of symbols it can contain, i.e. the alphabet of the machine, must be finite.
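To make the tape-and-rule-table picture concrete, here is a minimal sketch of a one-tape Turing machine simulator. The function name `run_turing_machine`, the state names, and the example rule table `INCREMENT` (which adds one to a binary number) are illustrative assumptions, not part of any standard definition.

```python
from collections import defaultdict

BLANK = "_"  # assumed blank symbol; part of the machine's finite alphabet


def run_turing_machine(rules, tape, state="start", max_steps=10_000):
    """Run a one-tape Turing machine until it enters the 'halt' state.

    rules maps (state, symbol) -> (symbol_to_write, move, next_state),
    where move is -1 (left), 0 (stay) or +1 (right).
    """
    # A dict with a default blank symbol stands in for the unbounded tape.
    cells = defaultdict(lambda: BLANK, enumerate(tape))
    head, steps = 0, 0
    while state != "halt":
        if steps >= max_steps:
            raise RuntimeError("step limit reached without halting")
        write, move, state = rules[(state, cells[head])]
        cells[head] = write
        head += move
        steps += 1
    used = [i for i, symbol in cells.items() if symbol != BLANK]
    if not used:
        return ""
    return "".join(cells[i] for i in range(min(used), max(used) + 1))


# Hypothetical rule table: walk to the right end, then add 1 with carry.
INCREMENT = {
    ("start", "0"): ("0", +1, "start"),
    ("start", "1"): ("1", +1, "start"),
    ("start", BLANK): (BLANK, -1, "carry"),
    ("carry", "1"): ("0", -1, "carry"),
    ("carry", "0"): ("1", 0, "halt"),
    ("carry", BLANK): ("1", 0, "halt"),
}

print(run_turing_machine(INCREMENT, "1011"))  # prints 1100
```

The alphabet here is the finite set `{"0", "1", "_"}`, while the dictionary-backed tape grows only as far as the head actually travels.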

The Universal Turing Machine (UTM) is a Turing machine with a fixed table of rules that can, given the right memory tape, mimic any other Turing machine. It can therefore implement any algorithm simply by being provided with the right memory tape.

Consequently, given a UTM, any algorithm or program can be implemented “simply” by delivering a sequence of instructions (i.e. the memory tape). This makes a UTM a universal device for algorithmic operations that, once built, never needs to be modified again, even when its use case changes. With a UTM, building an arbitrary program becomes a purely logical challenge: determine the correct set of instructions to feed to it.
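The idea of a fixed machine that is reprogrammed purely through its input can be sketched as follows. This is only an analogy: in a real UTM the interpreter is itself a Turing machine rule table, whereas here a Python function plays that role. The text encoding of the rule table (`state read write move next`, entries separated by `;`) and the two example machines are assumptions made for illustration.

```python
def universal(description: str, tape: str, blank: str = "_",
              max_steps: int = 10_000) -> str:
    """Fixed interpreter: decode a rule table from text, then run it on tape."""
    moves = {"L": -1, "R": 1, "N": 0}
    rules = {}
    for entry in description.split(";"):
        state, read, write, move, next_state = entry.split()
        rules[(state, read)] = (write, moves[move], next_state)

    cells = dict(enumerate(tape))
    state, head = "start", 0
    for _ in range(max_steps):
        if state == "halt":
            return "".join(cells[i] for i in sorted(cells)).strip(blank)
        write, step, state = rules[(state, cells.get(head, blank))]
        cells[head] = write
        head += step
    raise RuntimeError("step limit reached without halting")


# Two different "programs", delivered purely as data (hypothetical examples).
INVERT_BITS = "start 0 1 R start; start 1 0 R start; start _ _ N halt"
APPEND_ONE = "start 1 1 R start; start _ 1 N halt"

print(universal(INVERT_BITS, "1011"))  # prints 0100
print(universal(APPEND_ONE, "111"))    # prints 1111
```

The body of `universal` never changes between the two calls; only the description string does, which is the sense in which the device does not need to be modified when its use case changes.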

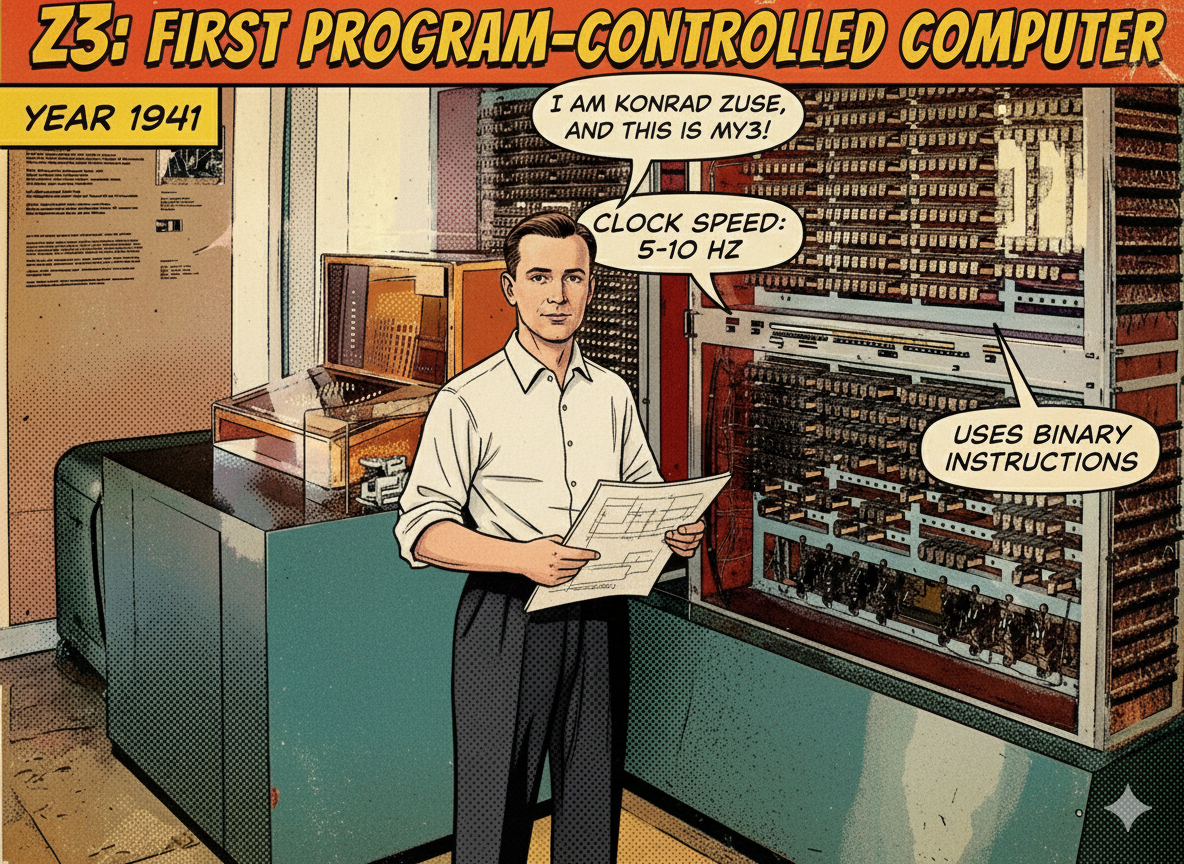

Z3 Computer

The Z3, completed by Konrad Zuse in 1941, was the world’s first working, programmable, and fully automatic digital computer. It was built from approximately 2,500 electromechanical relays and operated at a clock speed of 5–10 Hz. It was a pioneering machine that computed with binary floating-point numbers rather than the decimal representation favored by many of its contemporaries.

While it was originally designed to solve specific engineering problems like wing flutter in aircraft, Raúl Rojas showed in 1998 that it is, in theory, Turing-complete, i.e. equivalent in computational power to a Universal Turing Machine. This means that despite its electromechanical nature, its limited speed, and its lack of a conditional branch instruction, the Z3 possessed the fundamental logical flexibility to implement any computable algorithm, provided it was given a long enough instruction tape.

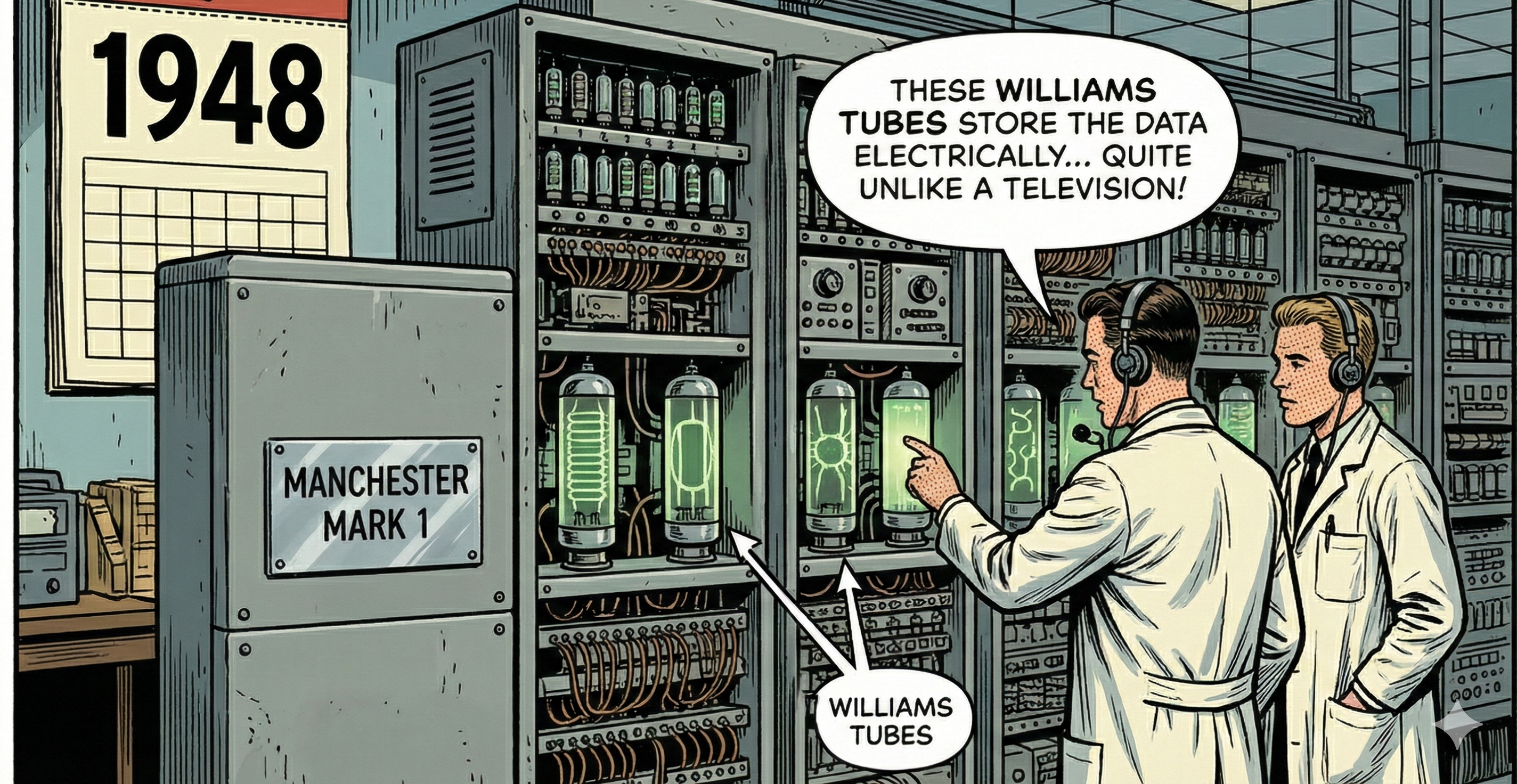

The Stored-program Computer

Unlike earlier machines that read instructions from external perforated tape or plugboards, the Manchester Mark 1, operational in 1949, used Williams tubes (modified cathode-ray tubes) to store both the program and the data as patterns of charge on the tube face. This “stored-program” architecture meant the machine could fetch, and even modify, its own instructions at electronic speed, effectively functioning as a high-speed Universal Turing Machine. Internal storage eliminated the mechanical bottleneck of physical tapes, transforming the computer from a fast calculator into a truly flexible, programmable processor.
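A toy model may help show what it means for program and data to share one memory. The sketch below is not the Mark 1's actual instruction set: the opcodes, the `opcode * 100 + address` encoding, and the memory layout are all hypothetical. The program sums three numbers by rewriting the address field of its own `ADD` instruction on each pass, something that is only possible because instructions are ordinary memory words.

```python
# Hypothetical opcodes for a one-accumulator machine.
HALT, LOAD, ADD, STORE, JNZ, SUB = range(6)


def encode(opcode, address=0):
    """Pack an instruction into one memory word (assumed encoding)."""
    return opcode * 100 + address


def run(memory, max_steps=10_000):
    """Fetch, decode and execute words from memory until HALT."""
    acc, pc = 0, 0
    for _ in range(max_steps):
        opcode, address = divmod(memory[pc], 100)  # fetch and decode
        pc += 1
        if opcode == HALT:
            return memory
        if opcode == LOAD:
            acc = memory[address]
        elif opcode == ADD:
            acc += memory[address]
        elif opcode == SUB:
            acc -= memory[address]
        elif opcode == STORE:
            memory[address] = acc  # may overwrite data or an instruction
        elif opcode == JNZ:
            if acc != 0:
                pc = address
        else:
            raise ValueError(f"unknown opcode {opcode}")
    raise RuntimeError("step limit reached without halting")


memory = [0] * 33
program = [
    encode(LOAD, 30),   # 0: acc = running total
    encode(ADD, 20),    # 1: acc += next value (address rewritten each pass)
    encode(STORE, 30),  # 2: save total
    encode(LOAD, 1),    # 3: load instruction word 1 as if it were data
    encode(ADD, 32),    # 4: add 1, bumping its address field
    encode(STORE, 1),   # 5: write the modified instruction back
    encode(LOAD, 31),   # 6: acc = loop counter
    encode(SUB, 32),    # 7: counter -= 1
    encode(STORE, 31),  # 8: save counter
    encode(JNZ, 0),     # 9: repeat while counter != 0
    encode(HALT),       # 10: stop
]
memory[0:len(program)] = program
memory[20:23] = [5, 7, 9]  # data to sum
memory[31] = 3             # loop counter
memory[32] = 1             # constant 1

run(memory)
print(memory[30])  # prints 21
```

After the run, word 1 holds `encode(ADD, 23)` rather than the original `encode(ADD, 20)`: the program has changed itself while executing.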

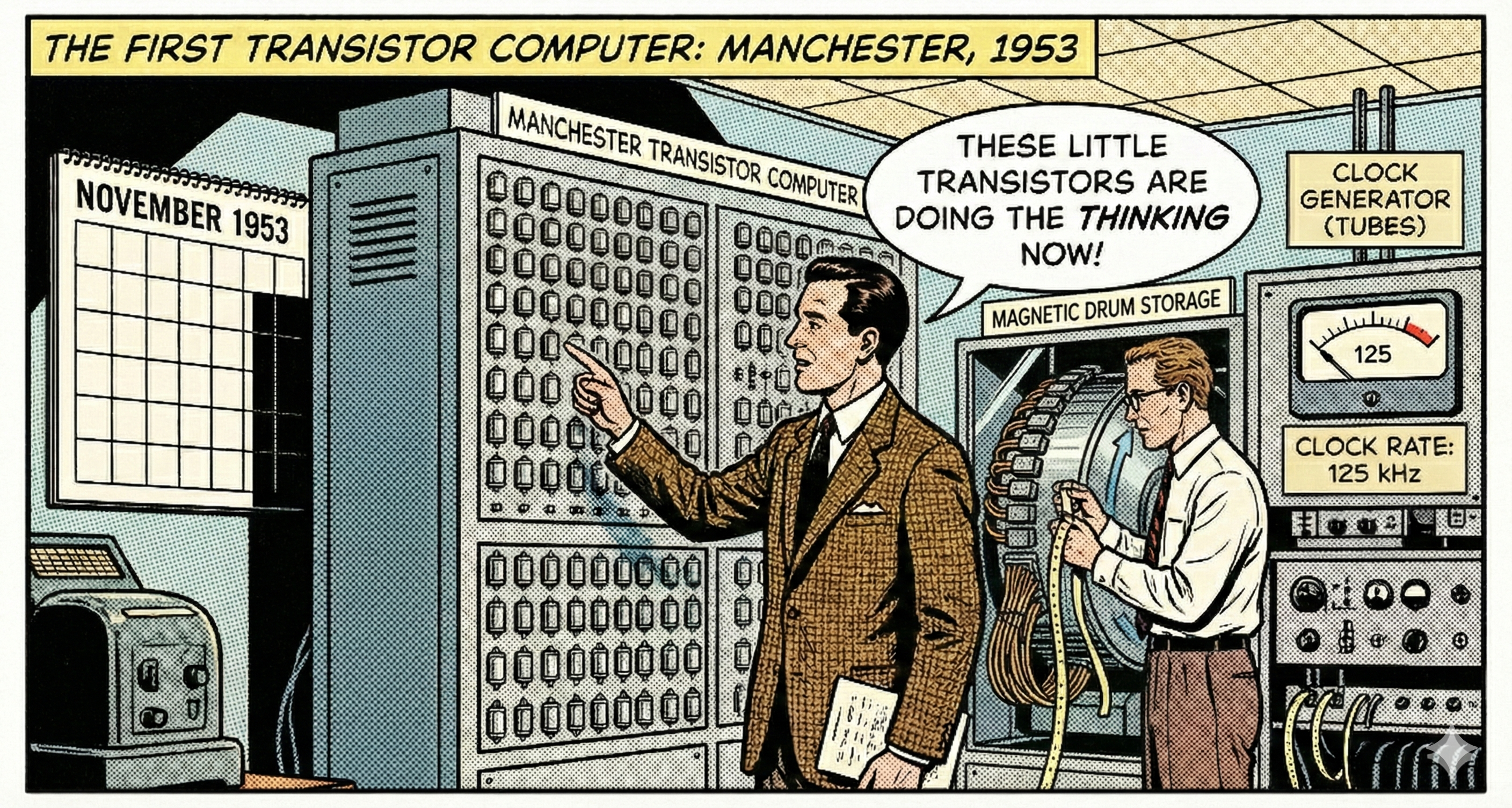

The Transistor Computer

The Manchester Transistor Computer (TC), first operational in November 1953, marked a pivotal shift in hardware engineering as the world’s first computer driven primarily by transistors rather than vacuum tubes. While much smaller, cooler, and more reliable than its valve-based predecessors, it was a hybrid transitional machine.

It used point-contact transistors (later replaced by more reliable junction transistors) for its logic circuits and relied on a magnetic drum for storage and, initially, a few vacuum tubes to generate its clock signal. Running at a clock rate of approximately 125 kHz, its primary historical significance was proving that solid-state technology was viable for building a Universal Turing Machine, setting the stage for the miniaturization revolution that followed.

Integrated Circuits & MOSFET

An Integrated Circuit (IC) is a set of electronic circuits—including transistors, resistors, and capacitors—manufactured together on a single piece of semiconductor material (usually silicon), allowing complex functions to be performed by a compact chip rather than a board of individual components.

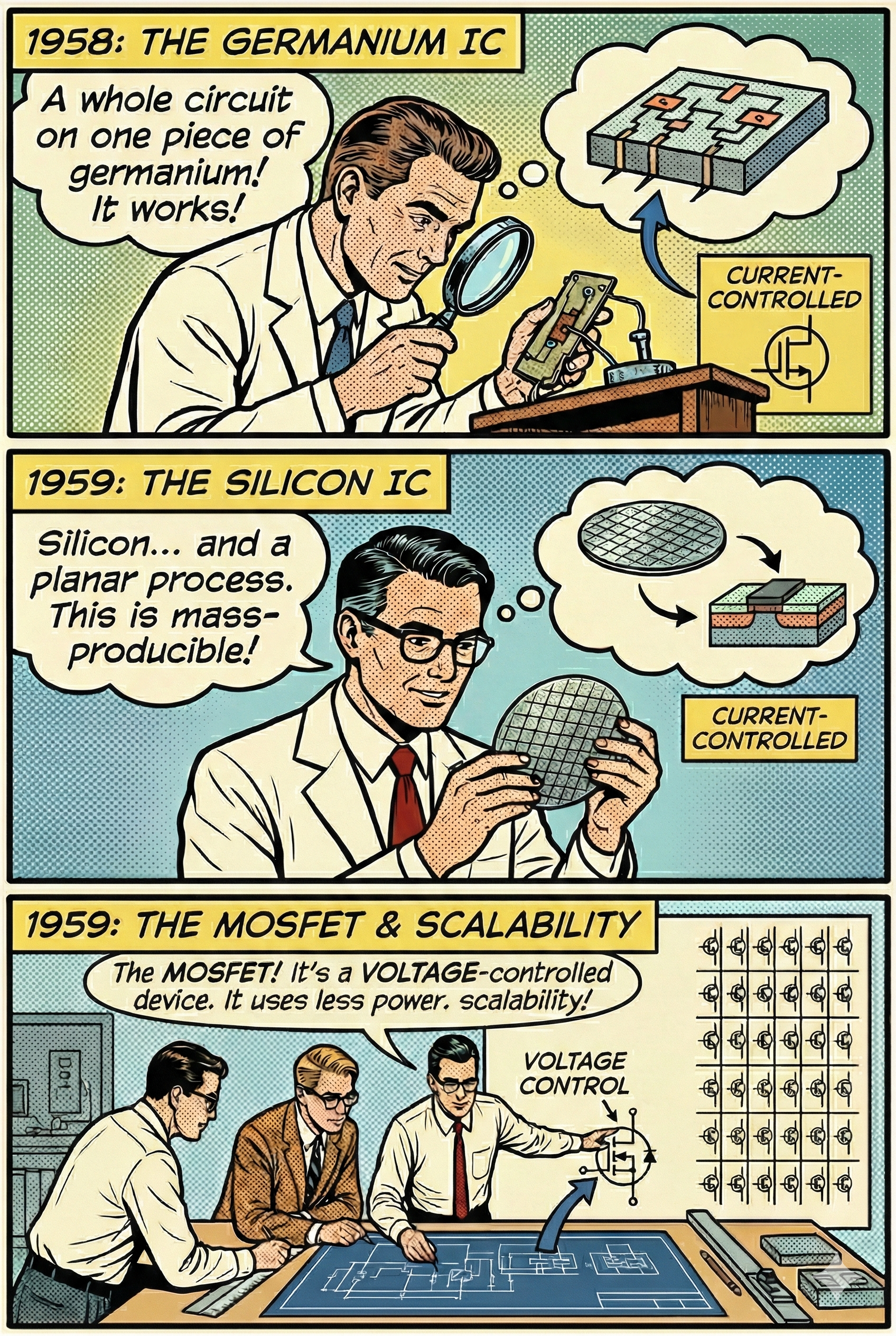

The IC was invented independently by Jack Kilby in 1958, who used germanium as the semiconductor substrate, and by Robert Noyce in 1959, who used a silicon chip and printed the connections directly onto it.

Noyce’s design built on the “planar process”, which was suitable for mass production and is the ancestor of how modern chips are produced.

Both ICs used current-driven bipolar junction transistors (BJTs), which were state of the art at the time.

At around the same time, Mohamed Atalla and Dawon Kahng built the first working metal-oxide-semiconductor field-effect transistor (MOSFET). MOSFETs are voltage-controlled transistors that consume significantly less power than BJTs while being much more scalable.

The combination of Noyce’s planar IC design and MOSFETs laid the foundation of modern computer chips.

Microprocessors

A microprocessor is a computer processor whose data processing logic and control are included on a single integrated circuit (IC), effectively miniaturizing the central processing unit (CPU) of a computer onto a single chip.

The MP944, developed by Garrett AiResearch for the U.S. Navy’s F-14 Tomcat fighter jet, technically stands as the world’s first microprocessor chipset, entering production in 1970. Unlike modern microprocessors, it distributed its functions across six separate chips. However, because it was a highly classified military project, its existence remained a secret until the declassification of the F-14’s design in 1998.

Consequently, the Intel 4004, released in 1971 and clocked at about 740 kHz, is recognized as the first commercially available single-chip microprocessor, effectively launching the personal computing revolution.

From a practical point of view, a microprocessor can be considered a Universal Turing Machine implemented on a silicon chip; given the right input, a microprocessor is capable of carrying out any algorithmic process.

The scaling laws

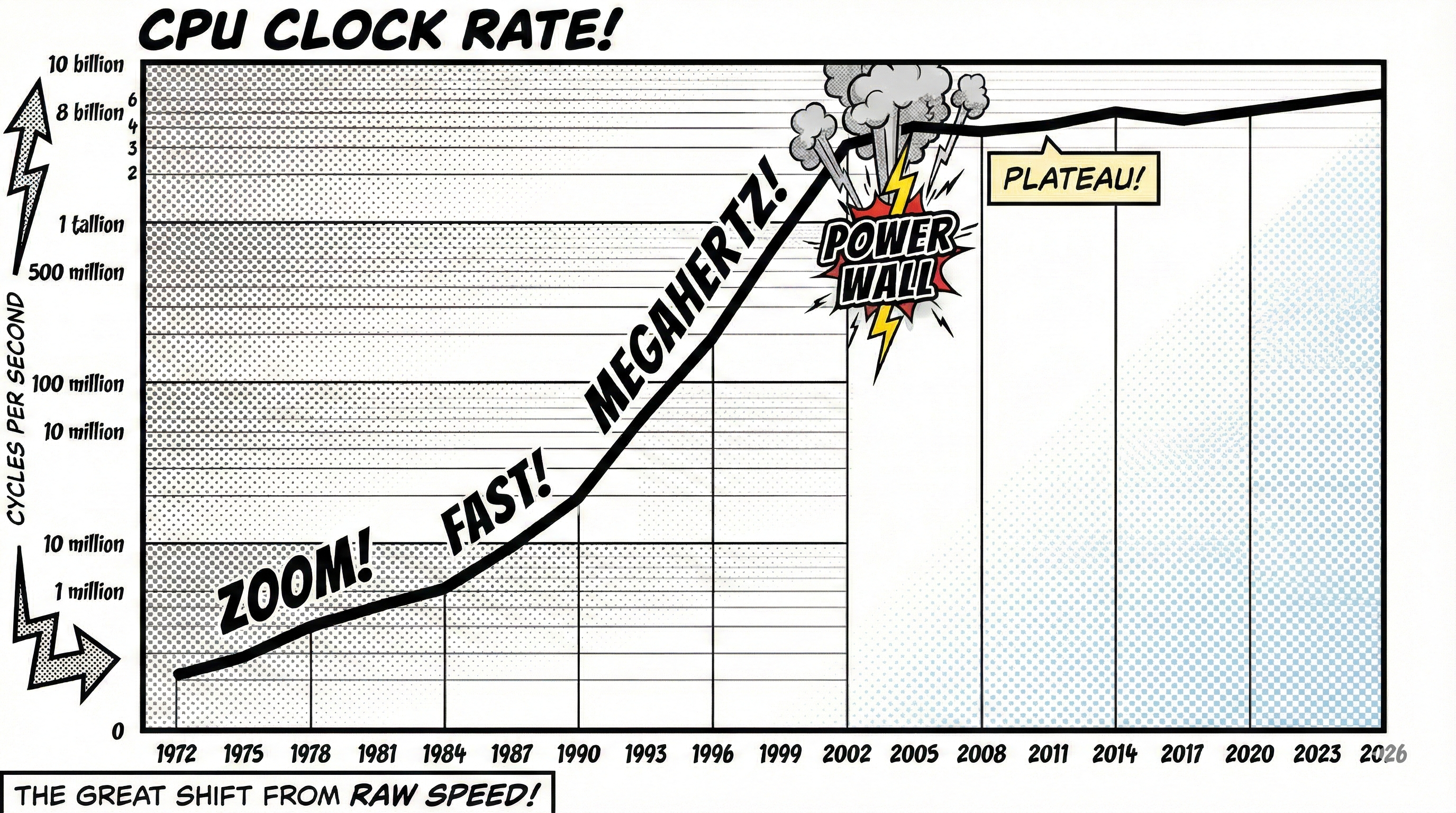

Moore’s Law is the observation that the number of transistors on a microchip doubles approximately every two years. Gordon Moore first formulated it in 1965, originally predicting a doubling every year, and revised the period to two years in 1975. This prediction set the pace for the entire semiconductor industry, driving a relentless pursuit of miniaturization that allowed engineers to pack exponentially more computing power into the same amount of space.
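The doubling rule is easy to turn into arithmetic. The sketch below projects a transistor count forward under an idealized, perfectly regular two-year doubling; the function name `projected_transistors` is an assumption, and the starting figure of 2,300 transistors for the Intel 4004 is a commonly cited number that does not come from the text above. Real chips only loosely follow this curve.

```python
def projected_transistors(initial_count, years, doubling_period=2.0):
    """Idealized Moore's Law projection: one doubling per doubling_period."""
    return initial_count * 2 ** (years / doubling_period)


# Assumed starting point: Intel 4004 (1971) with about 2,300 transistors.
for year in (1971, 1981, 1991, 2001):
    count = projected_transistors(2300, year - 1971)
    print(year, f"{count:,.0f}")
```

Ten years correspond to five doublings, i.e. a factor of 32, so each decade multiplies the projected count by 32.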

Complementing this was Dennard Scaling, formulated by Robert Dennard in 1974. Dennard observed that as transistors get smaller, their power density stays constant, so that power use stays in proportion with area. This critical realization meant that shrinking transistors didn’t just save space; it also allowed them to switch faster while using less energy, preventing the chip from overheating even as the transistor count skyrocketed.
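Why power density stays constant follows from a short calculation. The sketch below assumes the textbook form of ideal Dennard scaling: when linear dimensions shrink by a factor `k`, capacitance and supply voltage each scale by `1/k`, clock frequency by `k`, and dynamic power per transistor is proportional to capacitance × voltage² × frequency. All quantities are relative to the unscaled transistor, and the function name `dennard_scale` is illustrative.

```python
from fractions import Fraction


def dennard_scale(k):
    """Relative transistor properties after shrinking dimensions by 1/k."""
    k = Fraction(k)
    capacitance = 1 / k
    voltage = 1 / k
    frequency = k
    area = 1 / k**2
    power = capacitance * voltage**2 * frequency  # dynamic power ~ C * V^2 * f
    return {
        "frequency": frequency,
        "power_per_transistor": power,
        "area": area,
        "power_density": power / area,
    }


result = dennard_scale(2)
print(result["frequency"])             # prints 2
print(result["power_per_transistor"])  # prints 1/4
print(result["power_density"])         # prints 1
```

Halving the dimensions quarters both the power per transistor and its area, so four times as many transistors run at twice the frequency within the same power budget per unit area.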

Together, these two principles fueled the “Golden Age” of computing, ensuring that for decades computers became consistently faster, smaller, and cheaper all at once. The benefit was twofold: smaller transistors switched faster, and more of them fit on the same surface area. The growing transistor budget in turn drove a redesign and diversification of the CPU, enabling instruction-level parallelism, on-chip memory (cache), and dedicated circuits for specialized tasks such as video and audio data processing.

Physical limits

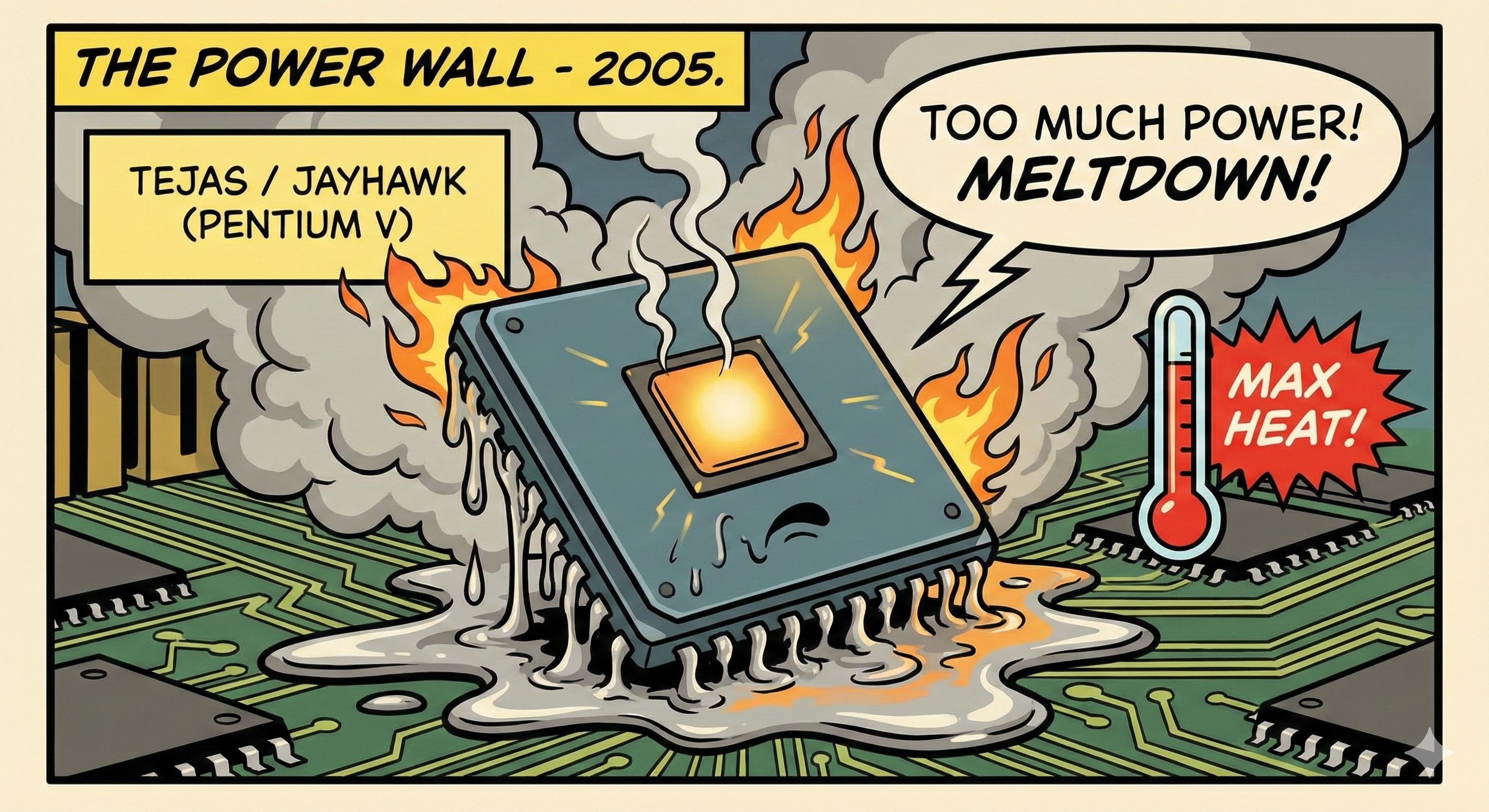

In practice, leakage current and threshold voltage do not scale down perfectly as transistor dimensions shrink. Below a certain size, the supply voltage can no longer be lowered in step with the dimensions, which leads to a sharp increase in power density. This creates a paradox where making the chip smaller actually generates more intense heat per square millimeter, to the point where it can no longer be cooled with standard methods.
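The effect can be illustrated with a deliberately simplified model that considers dynamic power only (proportional to capacitance × voltage² × frequency) and ignores the leakage power itself, which makes matters worse in reality. The function name `power_density` and its two flags are assumptions for this sketch; values are relative to the unscaled transistor after shrinking dimensions by a factor `k`.

```python
from fractions import Fraction


def power_density(k, voltage_scales=True, frequency_scales=True):
    """Relative dynamic power density after shrinking dimensions by 1/k."""
    k = Fraction(k)
    capacitance = 1 / k
    voltage = 1 / k if voltage_scales else Fraction(1)
    frequency = k if frequency_scales else Fraction(1)
    area = 1 / k**2
    return capacitance * voltage**2 * frequency / area


print(power_density(2))                          # prints 1
print(power_density(2, voltage_scales=False))    # prints 4
print(power_density(2, voltage_scales=False,
                    frequency_scales=False))     # prints 2
```

Under these assumptions, once voltage stops scaling, each halving of dimensions quadruples the power density if the frequency keeps rising, and still doubles it if the frequency is held flat. This is one way to see why clock frequency was the quantity that had to give.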

The practical limits were reached around 2004, famously exemplified by the cancellation of Intel’s Tejas and Jayhawk projects (intended to be the Pentium V). This moment became widely known as hitting the “Power Wall”. It marked the abrupt end of the “Megahertz Arms Race”.

The Power Wall

Since ~2005, Dennard scaling has broken down in practice. While the transistor count in integrated circuits continued to grow (and their size continued to decrease), their clock frequency stagnated. At this scale, it is simply no longer possible to run transistors at their maximum potential speed due to thermal limits. The era of speed gain through simple transistor scaling was over.

Sources:

https://en.wikipedia.org/wiki/Computer

https://en.wikipedia.org/wiki/Universal_Turing_machine

https://en.wikipedia.org/wiki/Z3_(computer)#Z3_as_a_universal_Turing_machine

https://en.wikipedia.org/wiki/Manchester_Mark_1

https://en.wikipedia.org/wiki/Integrated_circuit

https://en.wikipedia.org/wiki/Mohamed_Atalla

https://en.wikipedia.org/wiki/F-14_CADC

https://en.wikipedia.org/wiki/Intel_4004

https://en.wikipedia.org/wiki/Central_processing_unit

https://en.wikipedia.org/wiki/Limits_of_computation

https://en.wikipedia.org/wiki/Moore's_law

https://en.wikipedia.org/wiki/Dennard_scaling

https://en.wikipedia.org/wiki/Tejas_and_jayhawk